Food for Thought: Concurrency and Parallelism

Concurrency is often misunderstood and mistaken for parallelism. However, concurrency and parallelism are not the same thing.

But why should you care? All you want is to make your Python application fast and responsive, which you can achieve by distributing it across many CPUs. What difference does it make whether you call it concurrency or parallelism?

As it turns out, a lot. Here is what happens: you want to make your application faster, so you run it on more processors and... it gets slower. And you think: this is broken, this doesn't make any sense. But what is broken is the understanding of concurrency and parallelism. That's why in this blog post I'll explain the difference between the two and what's in it for you.

Parallelism is the simultaneous execution of multiple things, which may or may not be related. Parallelism is about doing a lot of things at once: it is the act of running multiple computations at literally the same time, which requires more than one processing unit (several cores, CPUs or machines).

Parallel computing comes in different types: bit-level, instruction-level, data and task parallelism. But whatever the type, it is entirely about execution.
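To make data parallelism concrete in Python, here is a minimal sketch using the standard library's `concurrent.futures.ProcessPoolExecutor`. The CPU-bound task (summing squares) and the helper names `split`, `sum_of_squares` and `parallel_sum_of_squares` are hypothetical, chosen only for illustration. Each slice of the data goes to a separate worker process, so on a multi-core machine the slices are computed at the same time. Processes are used rather than threads because, in standard CPython builds, the global interpreter lock (GIL) keeps threads from executing Python bytecode in parallel.

```python
from collections.abc import Sequence
from concurrent.futures import ProcessPoolExecutor


def sum_of_squares(chunk: Sequence[int]) -> int:
    """CPU-bound work on one slice of the data (hypothetical example task)."""
    return sum(x * x for x in chunk)


def split(data: Sequence[int], n_chunks: int) -> list[Sequence[int]]:
    """Split data into at most n_chunks contiguous slices of (nearly) equal size."""
    size = max(1, -(-len(data) // n_chunks))  # ceiling division, at least 1
    return [data[i:i + size] for i in range(0, len(data), size)]


def parallel_sum_of_squares(data: Sequence[int], workers: int = 4) -> int:
    # Data parallelism: the same function runs on different slices of the data,
    # each slice in its own process, and the partial sums are added at the end.
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, split(data, workers)))


if __name__ == "__main__":
    # The __main__ guard matters: with the "spawn" start method,
    # every worker process re-imports this module.
    data = list(range(1_000_000))
    print(parallel_sum_of_squares(data))
```

Whether this beats a plain loop depends on the workload: for small inputs, starting processes and pickling data between them can cost more than the computation itself, which is one way that running on more processors ends up slower.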

Concurrency is the ability to deal with a lot of things at once. It is about the composition of independently executing things. Concurrency is a way to structure a program by breaking it into pieces that can be executed independently. This involves not only structuring these pieces but also coordinating them, which requires communication. Concurrency is the act of managing multiple computations whose executions overlap in time, whether or not they actually run at the same instant.
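A minimal sketch of concurrency as composition plus communication, using the standard library's `asyncio`. The coroutines `producer` and `consumer` (and the `main` driver) are hypothetical: they are written as independent pieces that interact only through an `asyncio.Queue`. Both run on a single thread, so nothing here executes in parallel; it is the structure that makes the program concurrent.

```python
import asyncio


async def producer(queue: asyncio.Queue, items: list[str]) -> None:
    # Independent piece #1: produces work and communicates it via the queue.
    for item in items:
        await queue.put(item)
    await queue.put(None)  # sentinel: tells the consumer there is no more work


async def consumer(queue: asyncio.Queue, results: list[str]) -> None:
    # Independent piece #2: processes whatever the producer sends.
    while (item := await queue.get()) is not None:
        results.append(item.upper())


async def main(items: list[str]) -> list[str]:
    # A small buffer forces producer and consumer to take turns.
    queue: asyncio.Queue = asyncio.Queue(maxsize=2)
    results: list[str] = []
    # Both coroutines run concurrently on one thread, interleaved by the event loop.
    await asyncio.gather(producer(queue, items), consumer(queue, results))
    return results


if __name__ == "__main__":
    print(asyncio.run(main(["keyboard", "mouse", "network"])))
```

Running it prints `['KEYBOARD', 'MOUSE', 'NETWORK']`. Neither coroutine knows how the other works; they only agree on what goes through the queue, which is what lets you rearrange or replicate the pieces later.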

For example, an operating system handles multiple I/O devices through their drivers: a keyboard driver, a mouse driver, a network driver and a screen driver. The operating system manages all these drivers as independent things. They are concurrent, but they are not necessarily parallel, and that does not matter. With a single-core processor, only one process can run at any given moment, yet the operating system still serves every device by switching between them. So this is a concurrent model, but not necessarily a parallel one. It does not need to be parallel, but it benefits greatly from being concurrent.
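The same idea can be sketched in Python with `asyncio`, assuming that `asyncio.sleep` is an acceptable stand-in for a driver waiting on its device. The three "drivers" below are hypothetical. They share one thread (the event loop), so at most one of them executes at any moment, yet their waits overlap: the whole run takes roughly as long as the slowest driver, not the sum of all three.

```python
import asyncio
import time


async def driver(name: str, delay: float, log: list[str]) -> None:
    # Hypothetical driver: asyncio.sleep stands in for waiting on hardware I/O.
    log.append(f"{name} waiting")
    await asyncio.sleep(delay)  # yields control so the other drivers can run
    log.append(f"{name} done")


async def run_drivers(log: list[str]) -> None:
    # All three coroutines run on the same thread, interleaved by the event loop.
    await asyncio.gather(
        driver("keyboard", 0.3, log),
        driver("mouse", 0.2, log),
        driver("network", 0.1, log),
    )


if __name__ == "__main__":
    log: list[str] = []
    start = time.perf_counter()
    asyncio.run(run_drivers(log))
    elapsed = time.perf_counter() - start
    print(f"{elapsed:.2f}s")  # close to 0.3s (the slowest driver), not 0.6s
    print(log)
```

The log shows all three drivers start waiting before any of them finishes, and the network driver, with the shortest wait, finishes first: concurrency on one thread, without any parallelism.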

Concurrency is a way to structure things so that you can (maybe) run them in parallel to do a better or faster job. But parallelism is not the goal of concurrency. The goal of concurrency is a good structure: a structure that allows you to scale.

Concurrency and parallelism with Celery and Dask

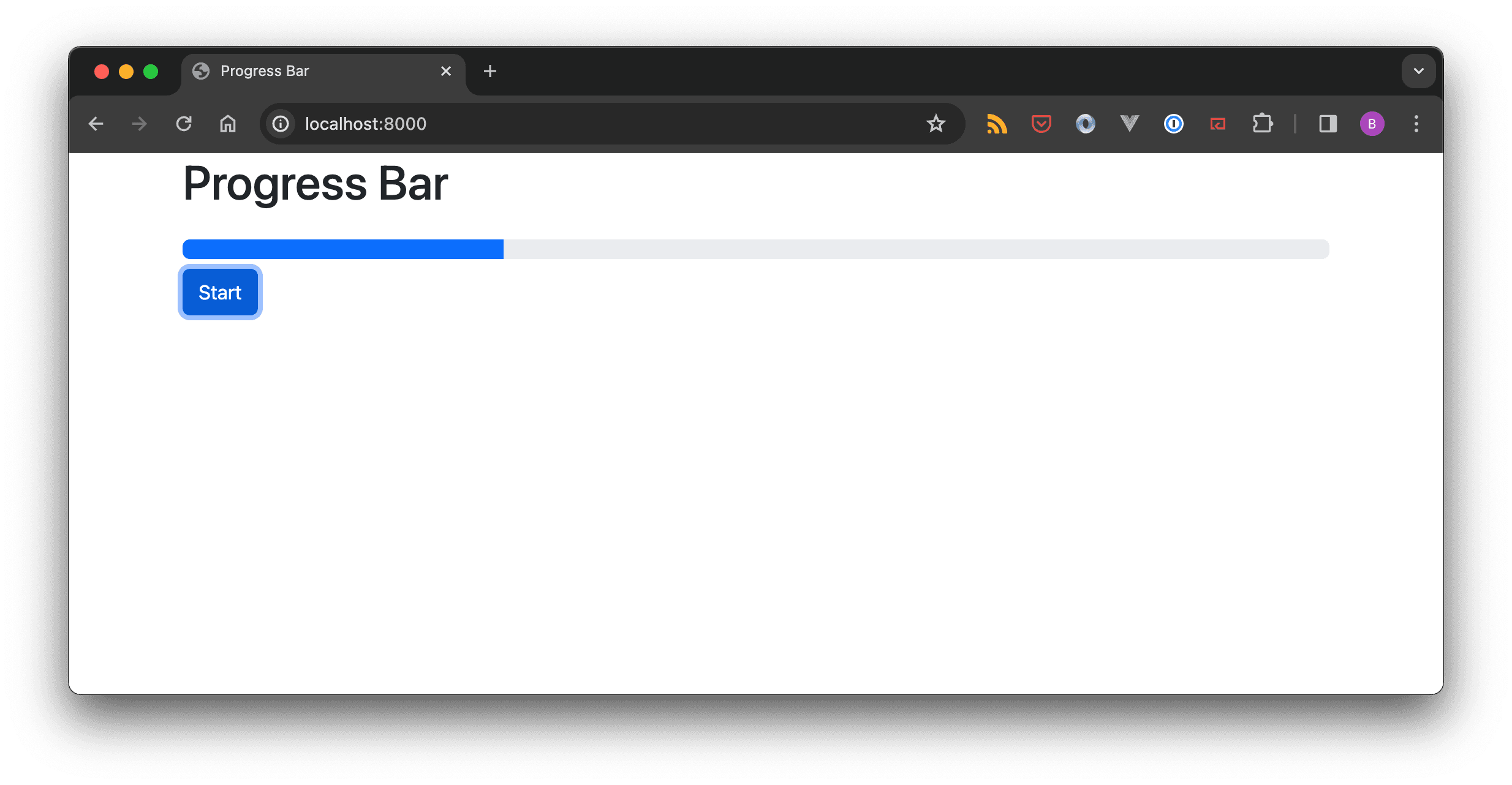

An example where concurrency matters is one of my consulting projects. A hedge fund needed to fetch (a lot of) market data from an external data vendor (Bloomberg). This process would run once a day and write the data to an internal database.

We broke the process down into small pieces: request the data from the Bloomberg web service, poll the web service at regular intervals to establish whether the data is ready for collection, collect the data, transform the data, and write the data into the database.
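A minimal sketch of that decomposition in Python. Everything vendor-specific is hypothetical: `FakeVendor` is an in-memory stand-in, not the Bloomberg API, the returned rows are made up, and an in-memory SQLite database plays the internal database. Each step is a plain function that takes and returns plain data, which is what allows it to run independently of the others (and, in a real system, to become a separate task).

```python
import sqlite3
import time


class FakeVendor:
    """Hypothetical in-memory stand-in for the vendor's web service.

    A request only becomes ready after it has been polled `polls_until_ready` times.
    """

    def __init__(self, polls_until_ready: int = 2) -> None:
        self.polls_until_ready = polls_until_ready
        self.polls: dict[str, int] = {}

    def submit(self, ticker: str) -> str:
        request_id = f"req-{ticker}"
        self.polls[request_id] = 0
        return request_id

    def is_ready(self, request_id: str) -> bool:
        self.polls[request_id] += 1
        return self.polls[request_id] >= self.polls_until_ready

    def fetch(self, request_id: str) -> list[tuple[str, str]]:
        ticker = request_id.removeprefix("req-")
        return [(ticker, "101.5"), (ticker, "102.0")]  # made-up raw rows, prices as text


# Step 1: request the data.
def request_data(vendor: FakeVendor, ticker: str) -> str:
    return vendor.submit(ticker)


# Step 2: poll at regular intervals until the data is ready.
def poll_until_ready(vendor: FakeVendor, request_id: str,
                     interval: float = 0.01, max_polls: int = 100) -> str:
    for _ in range(max_polls):
        if vendor.is_ready(request_id):
            return request_id
        time.sleep(interval)
    raise TimeoutError(f"{request_id} not ready after {max_polls} polls")


# Step 3: collect the raw data.
def collect(vendor: FakeVendor, request_id: str) -> list[tuple[str, str]]:
    return vendor.fetch(request_id)


# Step 4: transform it (here: parse the price strings into floats).
def transform(raw: list[tuple[str, str]]) -> list[tuple[str, float]]:
    return [(ticker, float(price)) for ticker, price in raw]


# Step 5: write it into the database.
def write(conn: sqlite3.Connection, rows: list[tuple[str, float]]) -> int:
    conn.executemany("INSERT INTO prices (ticker, price) VALUES (?, ?)", rows)
    conn.commit()
    return len(rows)


def run_pipeline(vendor: FakeVendor, conn: sqlite3.Connection, ticker: str) -> int:
    request_id = poll_until_ready(vendor, request_data(vendor, ticker))
    return write(conn, transform(collect(vendor, request_id)))


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE prices (ticker TEXT, price REAL)")
    print(run_pipeline(FakeVendor(), conn, "AAPL"))  # 2 rows written
```

`run_pipeline` simply chains the steps for one ticker. Because no step reaches into another's internals, any of them can be retried, replaced or moved to a different worker on its own.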

We defined the tasks so that each of them can be executed independently, which means they can run concurrently. To run the entire process faster, we then made it parallel by scaling up the number of workers (we used Celery). But this could not have happened without concurrency.
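Trying Celery itself requires a message broker, so as a self-contained approximation on one machine, the sketch below uses a standard-library thread pool in place of Celery workers. The job `fetch_and_store` is hypothetical and only sleeps to simulate waiting on the web service. The point is that the job definition stays the same while the number of workers changes.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_and_store(ticker: str) -> str:
    """Hypothetical independent job for one ticker (request, poll, collect,
    transform, write). time.sleep stands in for waiting on the web service."""
    time.sleep(0.05)
    return ticker


def run_all(tickers: list[str], workers: int) -> tuple[list[str], float]:
    """Runs every job on a pool of `workers` threads; returns results and wall time."""
    start = time.perf_counter()
    # The job definition is untouched; only the number of workers changes.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        done = list(pool.map(fetch_and_store, tickers))  # results keep input order
    return done, time.perf_counter() - start


if __name__ == "__main__":
    tickers = [f"T{i}" for i in range(20)]
    for workers in (1, 4, 20):
        _, elapsed = run_all(tickers, workers)
        print(f"{workers:>2} workers: {elapsed:.2f}s")
```

Because these jobs mostly wait, wall time should drop roughly in proportion to the number of workers. For CPU-heavy jobs, threads would not help in standard CPython, and you would need processes instead; Celery's default worker pool (prefork) uses processes, and its size can be set with the worker's `--concurrency` option (for example `celery -A proj worker --concurrency=8`, where `proj` is a placeholder app name).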

An example where parallel computation matters, but concurrency does not, is dealing with large Pandas datasets in a Jupyter notebook. If your datasets are so large that they do not fit into your computer's memory, you can solve that problem by splitting the dataset into partitions, distributing them across a cluster of machines and processing them in parallel. In this case, you want data parallelism; concurrency does not matter. This is a textbook example for Dask.
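Dask handles the partitioning, scheduling and combining for you, across cores or a whole cluster. To show what data parallelism means here without depending on Dask, the sketch below hand-rolls the same partition, aggregate, combine pattern for a group-wise mean using only the standard library. Worker processes on one machine stand in for cluster nodes, and the `(ticker, price)` rows and function names are hypothetical.

```python
from collections import defaultdict
from collections.abc import Iterable
from concurrent.futures import ProcessPoolExecutor

Row = tuple[str, float]                 # hypothetical (ticker, price) schema
Partial = dict[str, tuple[float, int]]  # key -> (sum, count)


def partial_agg(partition: list[Row]) -> Partial:
    """Runs independently on one partition (on a cluster: wherever it lives)."""
    sums: defaultdict[str, float] = defaultdict(float)
    counts: defaultdict[str, int] = defaultdict(int)
    for key, value in partition:
        sums[key] += value
        counts[key] += 1
    # Plain dict of tuples, so the result pickles cleanly back to the parent.
    return {key: (sums[key], counts[key]) for key in sums}


def combine(partials: Iterable[Partial]) -> dict[str, float]:
    """Adds up sums and counts across partitions and divides once at the end.
    (Averaging per-partition means would be wrong when partitions differ in size.)"""
    total_sum: defaultdict[str, float] = defaultdict(float)
    total_count: defaultdict[str, int] = defaultdict(int)
    for partial in partials:
        for key, (s, c) in partial.items():
            total_sum[key] += s
            total_count[key] += c
    return {key: total_sum[key] / total_count[key] for key in total_sum}


def parallel_group_mean(partitions: list[list[Row]], workers: int = 4) -> dict[str, float]:
    # Each partition is aggregated in its own process; only the small
    # partial results travel back to be combined.
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return combine(pool.map(partial_agg, partitions))


if __name__ == "__main__":
    partitions = [
        [("A", 1.0)],
        [("A", 2.0), ("A", 3.0), ("B", 10.0)],
    ]
    # {'A': 2.0, 'B': 10.0}; averaging the two partition means for 'A' would give 1.75
    print(parallel_group_mean(partitions))
```

With Dask itself, a comparable computation would look roughly like `dd.read_csv("prices-*.csv").groupby("ticker")["price"].mean().compute()` (after `import dask.dataframe as dd`; the file pattern and column names are hypothetical), and Dask would work through the partitions without loading the whole dataset into memory at once.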